action #129244

closed[alert][grafana] File systems alert for WebUI /results size:M

Description

Observation

Observed at 2023-05-12 17:23:00 +0200 CEST

One of the file systems /results is too full (> 90%)

See http://stats.openqa-monitor.qa.suse.de/d/WebuiDb?orgId=1&viewPanel=74

https://monitor.qa.suse.de/d/WebuiDb/webui-summary?orgId=1&viewPanel=74&from=1683650195801&to=1684141123756 for one recent instance where /results was exceeding the threshold and coming back below the threshold shortly.

Current usage:

Filesystem Size Used Avail Use% Mounted on

/dev/vdd 7.0T 6.3T 782G 90% /results

Acceptance criteria

AC1: There is enough space and headroom on the affected file system /results, i.e. considerably more free than 20%
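As a rough illustration of what AC1 implies for the figures in the df output above, the following sketch computes the current usage and the minimum amount that would have to be freed to reach 20% free space. The function name is hypothetical, the sizes are the rounded values reported by df, and since AC1 asks for considerably more than 20% free, the result is only a lower bound.

```python
def amount_to_free(size_t: float, used_t: float, target_free_fraction: float) -> float:
    """Return how much (in the same unit as the inputs) must be freed
    so that at least target_free_fraction of the file system is free."""
    max_used = size_t * (1.0 - target_free_fraction)
    return max(0.0, used_t - max_used)


# Rounded figures from the df output: 7.0T total, 6.3T used.
SIZE_T = 7.0
USED_T = 6.3

usage = USED_T / SIZE_T
to_free = amount_to_free(SIZE_T, USED_T, 0.20)
print(f"usage: {usage:.0%}, free at least {to_free:.2f}T to reach 20% free")
```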

Suggestions

- Check used space and evolution over time in https://monitor.qa.suse.de/d/nRDab3Jiz/openqa-jobs-test?orgId=1&viewPanel=19 , in particular check "Development Security" which looks too big in too short a time

- Check job group results retention settings for "not-important" results

- Crosscheck the use of "archiving": openQA should move "important" results to /results/archive on a separate storage device

Updated by okurz about 2 years ago

- Subject changed from [alert][grafana] File systems alert for WebUI to [alert][grafana] File systems alert for WebUI /results

- Description updated (diff)

https://monitor.qa.suse.de/d/WebuiDb/webui-summary?orgId=1&viewPanel=74&from=1683650195801&to=1684141123756 shows how recently /results was exceeding the threshold and then coming back automatically below the threshold. Current usage:

Filesystem Size Used Avail Use% Mounted on

/dev/vdd 7.0T 6.3T 782G 90% /results

Updated by okurz about 2 years ago

- Subject changed from [alert][grafana] File systems alert for WebUI /results to [alert][grafana] File systems alert for WebUI /results size:M

- Description updated (diff)

- Status changed from New to Workable

Updated by mkittler about 2 years ago

- File screenshot_20230515_152744.png added

- File screenshot_20230515_153751.png added

- Status changed from Workable to In Progress

The cleanup generally works. The archiving also generally still works (as shown by select id , t_created from jobs where archived = true order by id desc limit 30;).

I was going through the groups to determine culprits. I was at

- https://openqa.suse.de/admin/job_templates/268

- https://openqa.suse.de/admin/job_templates/168

- https://openqa.suse.de/admin/job_templates/143

- https://openqa.suse.de/admin/job_templates/265

That is when I realized that many groups seem to have increased a lot in the last 12 days. Then I realized that "a lot" is actually only a slight increase. We were fooled by Grafana changing the Y-axis, see https://gitlab.suse.de/openqa/salt-states-openqa/-/merge_requests/859.

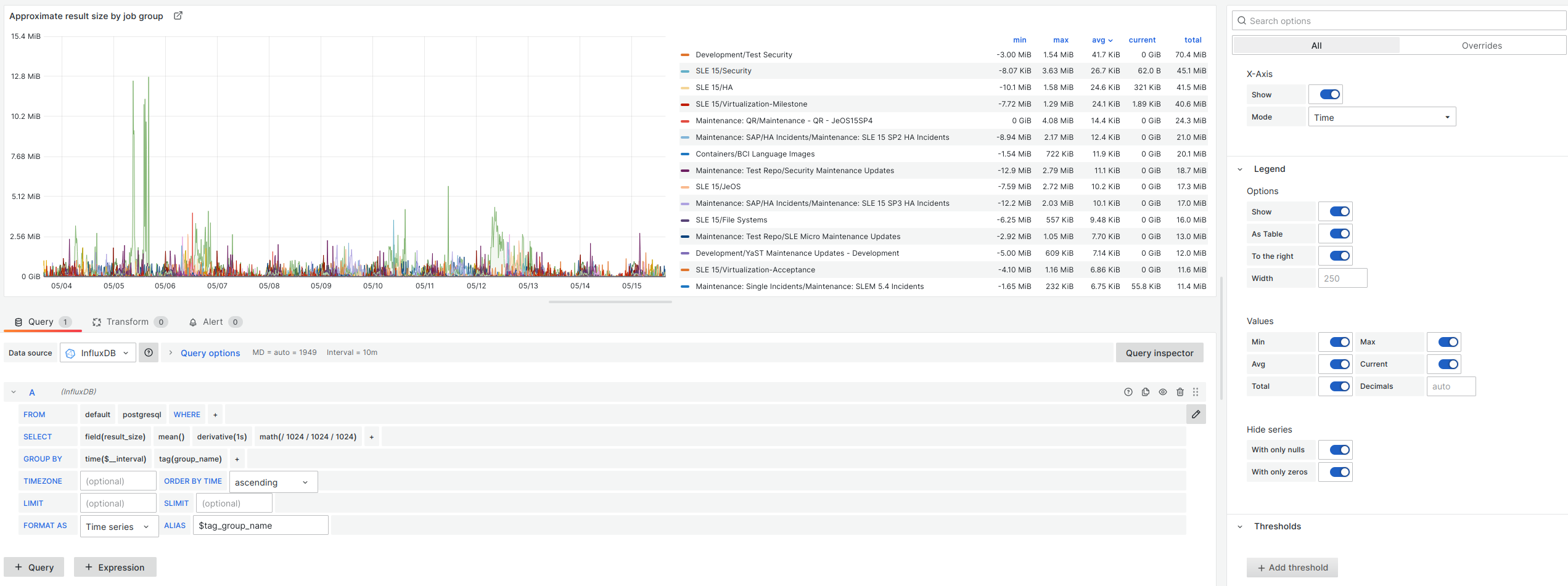

To find out which job groups had the biggest increase within the last 12 days for real I have changed the graph to compute the derivative and to display the total in the legend and sorted by that decadently. So I suppose the legend now shows the culprits for real:

This means that Test Security is still increasing more than the others, followed by Security, HA and Virtualization-Milestones. I suppose I'll start by decreasing the retention of Test Security, although it would make sense if someone could double-check my use of Grafana here.

Updated by mkittler about 2 years ago

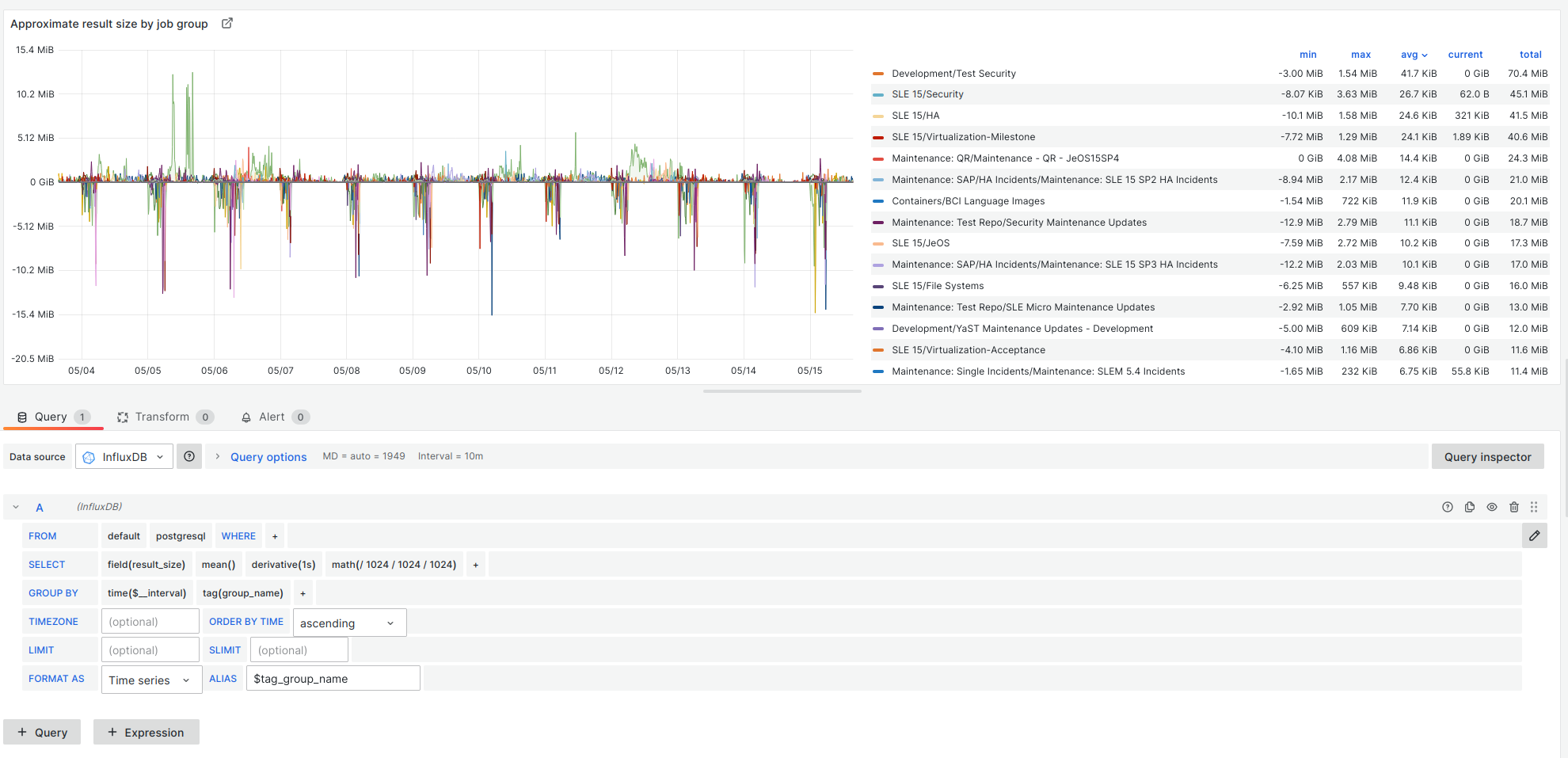

Note that the lack of negative values is just because I had the min. of the Y-axis still at 0. It actually looks like this:

So the size is the increase/decrease within one second and the total simply the sum of these over the course of 12 days (when I interpret what Grafana/InfluxDB do here correctly).
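The interpretation above can be checked with a small sketch. It assumes a series of (timestamp, size) samples for one job group; the sample values are made up for illustration and this is not what Grafana or InfluxDB actually execute. Weighting each per-second rate by the length of its interval and summing gives the net growth over the window, and negative rates show up whenever a cleanup shrinks the group.

```python
from typing import List, Tuple

Sample = Tuple[int, int]  # (unix timestamp in seconds, size in bytes)


def per_second_derivative(samples: List[Sample]) -> List[float]:
    """Rate of change in bytes per second between consecutive samples."""
    return [
        (s1 - s0) / (t1 - t0)
        for (t0, s0), (t1, s1) in zip(samples, samples[1:])
    ]


def net_change(samples: List[Sample]) -> float:
    """Sum of rate * interval length, i.e. the net growth over the window."""
    rates = per_second_derivative(samples)
    intervals = [t1 - t0 for (t0, _), (t1, _) in zip(samples, samples[1:])]
    return sum(rate * dt for rate, dt in zip(rates, intervals))


# Hypothetical samples: growth in the first hour, cleanup in the second.
samples = [
    (0, 100_000_000_000),
    (3600, 103_600_000_000),
    (7200, 101_800_000_000),
]

print(per_second_derivative(samples))
print(net_change(samples))
```

Note that summing the raw per-second rates without the interval weighting only ranks groups fairly if all series are sampled at the same, even spacing.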

I have meanwhile saved that variant of the panel under https://stats.openqa-monitor.qa.suse.de/d/b9298213-c3fa-4771-a409-c7f3a7d6c366/marius-test-dashbaord?orgId=1.

Updated by mkittler about 2 years ago

Since I've got an ok in #discuss-qe-security I've reduced the retention of non-important logs and results from 80 days to 60 days on https://openqa.suse.de/admin/job_templates/168.

Updated by okurz about 2 years ago

I merged https://gitlab.suse.de/openqa/salt-states-openqa/-/merge_requests/859

I suggest you also reduce the limits for https://openqa.suse.de/admin/job_templates/268 "Security" as 120/200 is IMHO too high especially when comparing to other job groups. When you ask the related squad you can suggest that "important" results can stay on 500/0 and also should be used more if more results are "important". I suggest you apply similar reasoning for other groups even though they don't seem to use such excessive limits.

Updated by openqa_review about 2 years ago

- Due date set to 2023-05-30

Setting due date based on mean cycle time of SUSE QE Tools

Updated by mkittler about 2 years ago

- Status changed from In Progress to Feedback

Changing the retention for "Development / Test Security" was very effective. The group now even has a slight negative growth (within the last 20 days, see https://stats.openqa-monitor.qa.suse.de/d/b9298213-c3fa-4771-a409-c7f3a7d6c366/marius-test-dashbaord?orgId=1&from=now-20d&to=now). This also means we are now back below the alert threshold.

I agree with your previous comment. So I have reduced the retention in "Security" slightly from 120/200 to 100/180. I wouldn't touch the other groups for now as they already have much lower retention anyways.

Updated by okurz about 2 years ago

Our space-aware cleanup should bring us back to 80% of storage usage, shouldn't it? https://monitor.qa.suse.de/d/WebuiDb/webui-summary?orgId=1&viewPanel=74&from=1678311461704&to=1684235193102 shows that we had been fine until 2023-05-02 and then went from 80% to 90%.

Updated by okurz about 2 years ago

- Related to action #129412: Verify cleanup behavior of groupless job results added

Updated by mkittler about 2 years ago

Lowering the retention (see #129244#note-10) had no further effect after running the cleanup. So I've lowered https://openqa.suse.de/admin/job_templates/268 further to 80/160 (still way more than https://openqa.suse.de/admin/job_templates/143 has) and triggered the cleanup again.

Updated by mkittler about 2 years ago

This was effective, so the Security group is no longer the top culprit (it moved down quite a lot, actually). However, we're not yet back to around 80 %.

I guess I'll have to reduce retentions further beginning with groups on top of https://stats.openqa-monitor.qa.suse.de/d/b9298213-c3fa-4771-a409-c7f3a7d6c366/marius-test-dashbaord?orgId=1&from=now-30d&to=now. Before that I'd actually like to sort out the situation of groupless jobs. Maybe there's a real problem there and we could already gain a lot there (avoiding having to reduce retentions further).

Updated by mkittler about 2 years ago

The cleanup of groupless jobs works, see #129412#note-7. The graph https://stats.openqa-monitor.qa.suse.de/d/nRDab3Jiz/openqa-jobs-test?orgId=1&viewPanel=19&from=1684162373491&to=1684320055535 also shows that it definitely works in production and at this point "groupless" is not the biggest group anymore.

So I guess I should reduce the retention of:

- groups with the biggest growth since the last 30 days:

  - https://openqa.suse.de/admin/job_templates/264 from 90/90 to 60/75 after deemed acceptable in #129523#note-14

  - https://openqa.suse.de/admin/job_templates/143 - it already has quite low retentions and it is strange that e.g. https://openqa.suse.de/tests/5748792 hasn't been cleaned up yet (or at least archived)

  - https://openqa.suse.de/admin/job_templates/168 done via #129244#note-7

- possibly groupless jobs, see #129412#note-8

Updated by mkittler about 2 years ago

About https://openqa.suse.de/admin/job_templates/143 and why jobs like https://openqa.suse.de/tests/5748792 have not been cleaned up or archived:

TLDR: The job is an important one and thus according to the configured retention supposed to stay forever. The archiving feature is only about logs and not the entire job result. The logs have actually been already cleaned up as expected. Thus there's nothing left to be archived.

The following builds are important:

martchus@openqa:~> sudo -u geekotest /usr/share/openqa/script/openqa eval -V 'app->schema->resultset("JobGroups")->find(143)->important_builds'

[info] Loading external plugin AMQP

[info] Loading external plugin ObsRsync

[

"100.1",

"101.1",

"117.1",

"124.5",

"126.1",

"137.1",

"142.3",

"15.2",

"151.1",

"156.3",

"162.1",

"162.7",

"164.1",

"168.1",

"172.3",

"178.1",

"181.4",

"187.1",

"19.1",

"190.3",

"195.1",

"202.6",

"209.2",

"21.1",

"213.2",

"227.1",

"228.1",

"228.2",

"24.1",

"25.1",

"29.1",

"32.1",

"38.1",

"58.1",

"61.1",

"63.1",

"64.17",

"66.1",

"72.4",

"79.1",

"80.5",

"88.4",

"89.1",

"9.1",

"93.2"

]

So https://openqa.suse.de/tests/5748792 is actually an important job which explains why it hasn't been cleaned up yet. It also hasn't been archived yet because:

- The job is exceeding the retention for important logs which subsequently have in fact been removed (and not preserved).

- The job is not exceeding the retention for important results. That's why it is still there at all.

The archiving feature's description in openqa.ini says: "Moves logs of jobs which are preserved during the cleanup because they are considered important to …". As stated in the first point above, the logs of that job have not been preserved. So it simply doesn't fall into the to-be-archived category as its logs are gone anyways. The code of the archiving feature is also in accordance with that description; I suppose that's really what happens.

Note that the "Moves logs of …" part of the description is also very important. It means that the archiving feature is only about logs. This also makes sense because screenshots might be shared between jobs and are thus excluded from archiving. The job's data in the database can of course also not be archived.

It may look a bit weird that the worker log and vars.json are actually still present. However, I suppose that's not a bug but a feature. Likely the idea is that those two files are usually not big anyways. Maybe it makes nevertheless sense to have at least the worker log deleted as well for the sake of consistency.
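The reasoning about https://openqa.suse.de/tests/5748792 can be summarized in a minimal sketch. This is not openQA's cleanup code; the class, the function names and the retention values are hypothetical, and the sketch assumes that a retention of 0 means "keep forever".

```python
from dataclasses import dataclass


@dataclass
class ImportantRetention:
    """Hypothetical retention pair for important jobs, in days."""
    logs_days: int
    results_days: int


def exceeds(age_days: int, limit_days: int) -> bool:
    # Assumption of this sketch: a limit of 0 means "keep forever".
    return limit_days != 0 and age_days > limit_days


def classify_important_job(age_days: int, retention: ImportantRetention) -> str:
    """Describe what the cleanup leaves behind for an important job."""
    if exceeds(age_days, retention.results_days):
        return "result removed"
    if exceeds(age_days, retention.logs_days):
        # The case discussed above: logs are gone, the result stays.
        return "logs removed, result kept, nothing left to archive"
    return "logs preserved, archiving may apply"


# Hypothetical values: logs kept for 500 days, results kept forever.
retention = ImportantRetention(logs_days=500, results_days=0)
print(classify_important_job(900, retention))
print(classify_important_job(100, retention))
```

The middle branch is the situation of the job in question: it is older than the retention for important logs but not older than the retention for important results.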

Updated by acarvajal about 2 years ago

mkittler wrote:

> About https://openqa.suse.de/admin/job_templates/143 and why jobs like https://openqa.suse.de/tests/5748792 have not been cleaned up or archived:
>
> TLDR: The job is an important one and thus according to the configured retention supposed to stay forever. The archiving feature is only about logs and not the entire job result. The logs have actually been already cleaned up as expected. Thus there's nothing left to be archived.

But is it OK that the job belongs to 15-SP3 build 168.1 (https://openqa.suse.de/tests/overview?version=15-SP3&build=168.1&distri=sle&groupid=143) which is not marked as important as far as I understand, and not to 15-SP2 build 168.1 which is? (https://openqa.suse.de/tests/overview?distri=sle&version=15-SP2&build=168.1&groupid=143 - 15-SP2 build 168.1 is labeled as RC2, so important)

Updated by mkittler about 2 years ago

According to our current "definition" of how build tagging works, that is at least expected. However, it surely seems inefficient and we should likely consider the version here as well. That is likely also not very hard to implement.

By the way, your observation can easily be backed up by the concrete output of

martchus@openqa:~> sudo -u geekotest /usr/share/openqa/script/openqa eval -V 'app->schema->resultset("JobGroups")->find(143)->tags'

[info] Loading external plugin AMQP

[info] Loading external plugin ObsRsync

{

"15-SP1-190.3" => {

"build" => "190.3",

"description" => "RC1",

"type" => "important",

"version" => "15-SP1"

},

"15-SP1-213.2" => {

"build" => "213.2",

"description" => "RC2",

"type" => "important",

"version" => "15-SP1"

},

"15-SP1-227.1" => {

"build" => "227.1",

"description" => "GMC",

"type" => "important",

"version" => "15-SP1"

},

"15-SP1-228.1" => {

"build" => "228.1",

"description" => "GMC2",

"type" => "important",

"version" => "15-SP1"

},

"15-SP1-228.2" => {

"build" => "228.2",

"description" => "GMC3",

"type" => "important",

"version" => "15-SP1"

},

"15-SP2-126.1" => {

"build" => "126.1",

"description" => "Beta2",

"type" => "important",

"version" => "15-SP2"

},

"15-SP2-162.1" => {

"build" => "162.1",

"description" => "RC1",

"type" => "important",

"version" => "15-SP2"

},

"15-SP2-164.1" => {

"build" => "164.1",

"description" => "Snapshot9",

"type" => "important",

"version" => "15-SP2"

},

"15-SP2-168.1" => {

"build" => "168.1",

"description" => "RC2",

"type" => "important",

"version" => "15-SP2"

},

"15-SP2-181.4" => {

"build" => "181.4",

"description" => "PublicRC1",

"type" => "important",

"version" => "15-SP2"

},

"15-SP2-195.1" => {

"build" => "195.1",

"description" => "PublicRC2",

"type" => "important",

"version" => "15-SP2"

},

"15-SP2-202.6" => {

"build" => "202.6",

"description" => "RC3",

"type" => "important",

"version" => "15-SP2"

},

"15-SP2-209.2" => {

"build" => "209.2",

"description" => "GMC1",

"type" => "important",

"version" => "15-SP2"

},

"15-SP3-100.1" => {

"build" => "100.1",

"description" => "Beta2",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-124.5" => {

"build" => "124.5",

"description" => "Beta3",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-142.3" => {

"build" => "142.3",

"description" => "Beta4",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-156.3" => {

"build" => "156.3",

"description" => "PublicBeta",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-162.7" => {

"build" => "162.7",

"description" => "RC1",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-172.3" => {

"build" => "172.3",

"description" => "RC2",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-178.1" => {

"build" => "178.1",

"description" => "PublicRC",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-187.1" => {

"build" => "187.1",

"description" => "GMC",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-63.1" => {

"build" => "63.1",

"description" => "Alpha4",

"type" => "important",

"version" => "15-SP3"

},

"15-SP3-89.1" => {

"build" => "89.1",

"description" => "Beta1",

"type" => "important",

"version" => "15-SP3"

},

"15-SP4-101.1" => {

"build" => "101.1",

"description" => "PublicBeta-202202",

"type" => "important",

"version" => "15-SP4"

},

"15-SP4-117.1" => {

"build" => "117.1",

"description" => "RC1-202203",

"type" => "important",

"version" => "15-SP4"

},

"15-SP4-137.1" => {

"build" => "137.1",

"description" => "PublicRC-202204",

"type" => "important",

"version" => "15-SP4"

},

"15-SP4-151.1" => {

"build" => "151.1",

"description" => "GMC-202205",

"type" => "important",

"version" => "15-SP4"

},

"15-SP4-61.1" => {

"build" => "61.1",

"description" => "Alpha-202111-1",

"type" => "important",

"version" => "15-SP4"

},

"15-SP4-64.17" => {

"build" => "64.17",

"description" => "Beta1",

"type" => "important",

"version" => "15-SP4"

},

"15-SP4-79.1" => {

"build" => "79.1",

"description" => "Beta2",

"type" => "important",

"version" => "15-SP4"

},

"15-SP4-88.4" => {

"build" => "88.4",

"description" => "Beta3",

"type" => "important",

"version" => "15-SP4"

},

"15-SP5-15.2" => {

"build" => "15.2",

"description" => "Alpha-202208-1",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-19.1" => {

"build" => "19.1",

"description" => "Alpha-202209-1",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-21.1" => {

"build" => "21.1",

"description" => "Alpha-202209-2",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-24.1" => {

"build" => "24.1",

"description" => "Alpha-202209-3",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-25.1" => {

"build" => "25.1",

"description" => "Alpha-202210-1",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-29.1" => {

"build" => "29.1",

"description" => "Alpha-202210-2",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-32.1" => {

"build" => "32.1",

"description" => "Alpha-202210-3",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-38.1" => {

"build" => "38.1",

"description" => "Beta1-202211",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-58.1" => {

"build" => "58.1",

"description" => "Beta2-202212",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-66.1" => {

"build" => "66.1",

"description" => "Beta3-202301",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-72.4" => {

"build" => "72.4",

"description" => "PublicBeta-202302",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-80.5" => {

"build" => "80.5",

"description" => "RC1-20230313",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-9.1" => {

"build" => "9.1",

"description" => "InfraTest1",

"type" => "important",

"version" => "15-SP5"

},

"15-SP5-93.2" => {

"build" => "93.2",

"description" => "PublicRC-202304",

"type" => "important",

"version" => "15-SP5"

}

}

which only has the entry

"15-SP2-168.1" => {

"build" => "168.1",

"description" => "RC2",

"type" => "important",

"version" => "15-SP2"

},

but not a similar entry "15-SP3-168.1" => …. Nevertheless our "build tagging" for marking jobs as important exclusively uses the list of builds mentioned in #129244#note-17.

Updated by mkittler about 2 years ago

To consider version and build for the cleanup one could just use ( concat(VERSION, ':', BUILD) in ('15-SP2:168.1') ) in the where-clause of the select statement. The hard part is likely just doing this through DBIx. (Luckily we can always resort to using raw SQL queries.)

By the way, this shows that in this concrete example we're preserving 485 jobs needlessly:

openqa=# select count(id), pg_size_pretty(sum(result_size)) from jobs where group_id = 143 and ( concat(VERSION, ':', BUILD) in ('15-SP2:168.1') );

count | pg_size_pretty

-------+----------------

356 | 7276 MB

(1 row)

openqa=# select count(id), pg_size_pretty(sum(result_size)) from jobs where group_id = 143 and ( concat(VERSION, ':', BUILD) in ('15-SP3:168.1') );

count | pg_size_pretty

-------+----------------

485 | 579 MB

(1 row)
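The difference between matching on the build alone and matching on version plus build can be illustrated with a small sketch. It uses two of the tag entries shown earlier in this ticket; the function names and the job dictionaries are hypothetical and do not reflect how openQA implements this.

```python
# Two of the tags shown above for job group 143.
tags = {
    "15-SP2-168.1": {"build": "168.1", "type": "important", "version": "15-SP2"},
    "15-SP3-172.3": {"build": "172.3", "type": "important", "version": "15-SP3"},
}


def important_by_build(job: dict, tags: dict) -> bool:
    """Match on the build number only, ignoring the version."""
    builds = {t["build"] for t in tags.values() if t["type"] == "important"}
    return job["BUILD"] in builds


def important_by_version_and_build(job: dict, tags: dict) -> bool:
    """Match on the (version, build) pair, as the where-clause above would."""
    pairs = {
        (t["version"], t["build"])
        for t in tags.values()
        if t["type"] == "important"
    }
    return (job["VERSION"], job["BUILD"]) in pairs


sp2_job = {"VERSION": "15-SP2", "BUILD": "168.1"}
sp3_job = {"VERSION": "15-SP3", "BUILD": "168.1"}

for job in (sp2_job, sp3_job):
    print(job, important_by_build(job, tags), important_by_version_and_build(job, tags))
```

With build-only matching the 15-SP3 job counts as important although only 15-SP2 build 168.1 was tagged, which is the needless preservation measured by the queries above.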

Updated by mkittler about 2 years ago

- This PR makes openQA's cleanup consider the tag's version: https://github.com/os-autoinst/openQA/pull/5146

- This PR is going to allow us to set lower retentions for groupless jobs: https://github.com/os-autoinst/openQA/pull/5142

Updated by okurz about 2 years ago

- Related to coordination #68923: [epic] Use external videoencoder in production auto_review:"External encoder not accepting data" added

Updated by okurz about 2 years ago

mkittler wrote:

> Note that the "Moves logs of …" part of the description is also very important. It means that the archiving feature is only about logs. This also makes sense because screenshots might be shared between jobs and are thus excluded from archiving.

- Is there anything for an archived job that still stays in /results besides screenshots?

- Can you check if screenshots are becoming a problem for the size on OSD? Are screenshots within /results?

> The job's data in the database can of course also not be archived.

That's ok, at least for the current case because the database is not on /results

> It may look a bit weird that the worker log and vars.json are actually still present. However, I suppose that's not a bug but a feature. Likely the idea is that those two files are usually not big anyways. Maybe it makes nevertheless sense to have at least the worker log deleted as well for the sake of consistency.

- Yes, I would expect deleting the worker log would help to free some space in some cases. Can you crosscheck that?

Updated by mkittler about 2 years ago

MR for reducing the retention of groupless jobs: https://gitlab.suse.de/openqa/salt-states-openqa/-/merge_requests/864 (as https://github.com/os-autoinst/openQA/pull/5142 has been merged meanwhile)

PR for deleting the worker log as well: https://github.com/os-autoinst/openQA/pull/5148

Updated by mkittler about 2 years ago

- The MR has been merged and deployed and I've just started the result cleanup. Let's see how much it'll gain us.

- The PR has been merged. This will not affect any existing jobs of course.

Updated by mkittler about 2 years ago

- Status changed from Feedback to Resolved

We're currently at 79.5 %. That's good. My changes have contributed to that. However, the final drop to around 80 % only happened gradually and not immediately after the cleanup I triggered 8 days ago. So the drop is likely not only a consequence of my changes. I would nevertheless close this issue for now and reduce more retentions only when we really have to.

Updated by nicksinger 10 months ago

- Copied to action #164979: [alert][grafana] File systems alert for WebUI /results size:S added