action #132140

coordination #121720 (closed): [saga][epic] Migration to QE setup in PRG2+NUE3 while ensuring availability

coordination #123800: [epic] Provide SUSE QE Tools services running in PRG2 aka. Prg CoLo

Support move of PowerPC machines to PRG2 size:M


Description

Motivation

Most PowerPC machines are being moved to PRG2 with the help of IBM and BuildOps. We need to support the process and help bring the machines back so that we can use them as part of openQA as well as for non-openQA QE work.

Acceptance criteria

- AC1: All PowerPC machines referenced in https://gitlab.suse.de/openqa/salt-pillars-openqa/-/blob/master/openqa/workerconf.sls are usable after the move to PRG2

Suggestions

- DONE Follow PowerPC related moving coordination as referenced in #100455#Important-documents

- DONE Wait for Eng-Infra/BuildOPS/Power-owners to inform us about the availability of the network and machines

- DONE Wait for mgriessmeier to get back to us on whether we will get an HMC or a VM provided by Eng-Infra for us to install (how would we install on a VM if they can't give us access to osd/o3 for years?)

- DONE Ensure we can connect over HMC

- DONE If necessary we can also try to set up our own HMC

- Update HMC details in https://gitlab.suse.de/openqa/salt-pillars-openqa/-/blob/master/openqa/workerconf.sls where necessary

- Ensure we have access to machines manually as well as with verification openQA jobs, both for o3+osd

- Inform users about the result

Rollback actions

- DONE Remove silence "alertname=Queue: State (SUSE) - too few jobs executed alert" https://stats.openqa-monitor.qa.suse.de/alerting/silences

Out of scope

- non-openQA machines, see #139109


Updated by okurz about 1 year ago

- Subject changed from Support move of PowerPC machines to PRG2 to Support move of PowerPC machines to PRG2 size:M

- Description updated (diff)

Updated by okurz about 1 year ago

mgriessmeier found http://dist.suse.de/IBM/HMC.power.10.1.1010/ . powerhmc3 is running on the morla virtualization cluster maintained by Eng-Infra. Maybe we can ask to copy that to PRG2, or ask Eng-Infra for a new VM and then just deploy from the mentioned directory. According to mgriessmeier BuildOps does not need an HMC; maybe they have a better solution we can use as well, ask jdsn. Discussed with mgriessmeier: maybe QE Kernel can help push this forward.

Updated by okurz about 1 year ago

Meeting with jford, mcaj, horon, mgriessmeier, gpfuetzenreuter. We decided which racks the PowerPC machines in PRG2 should go into. There are two racks planned for LSG QE:

We added the corresponding racks in

https://mysuse.sharepoint.com/:x:/r/sites/DatacentreTransformation/_layouts/15/Doc.aspx?sourcedoc=%7B4B68F941-C7EC-431A-B3CF-875DBBCC6C83%7D&file=2023-01-16_2023Q1_PowerPC_capacity_planning_-_WIP_v0.7.0_jf.xlsx&action=default&mobileredirect=true&DefaultItemOpen=1

For the multiple "QE but not openQA" machines no specific place had been planned within the QE/openQA racks so far, but we have some space available, so we planned all those PowerPC machines to go to PRG2-J11 as well. If there is not enough space, those machines can move to other spare racks at Eng-Infra's discretion.

horon provided two links regarding HMC setup that might help:

- https://mysuse-my.sharepoint.com/:b:/r/personal/hector_oron_suse_com/Documents/Microsoft%20Teams%20Chat%20Files/hmc-network-setup-5%201.pdf?csf=1&web=1&e=HNGKlC

- https://mysuse-my.sharepoint.com/:w:/r/personal/hector_oron_suse_com/Documents/Microsoft%20Teams%20Chat%20Files/hmc-setup-requirements%201.odt?d=w41f0660bb678493b92e19345b9f46cbc&csf=1&web=1&e=tdZmns

Updated by okurz about 1 year ago

Trying HMC installation on osiris-1.qe.nue2.suse.org:

```sh
cd /var/lib/libvirt/images/hmc
wget -c http://dist.suse.de/IBM/HMC.power.10.1.1010/HMC10.1.1010.0-2108131106-x86_64.KVM.tgz -O - | tar -xzpf -
ln -s /var/lib/libvirt/images/hmc/domain.xml /etc/libvirt/qemu/ibm-hmc-power-10.xml
```

After multiple adjustments to the machine definition XML file, virsh create /etc/libvirt/qemu/ibm-hmc-power-10.xml succeeded. I then connected using virt-manager and watched the system boot. Eventually an ncurses wizard starts where you need to accept multiple times, then an X session with a graphical wizard starts. I set "susetesting" as the password for the relevant users and created an "okurz" user with my personal password.

After the wizard, the X session opens the usual HMC web interface in a built-in Firefox. The VM has the MAC address 52:54:00:49:88:12, so from walter1.qe.nue2.suse.org I could find its IP address, 10.168.193.43. I then actually managed to add power8 with its current IPv4 address 10.168.193.46, but the HMC showed something about "version mismatch", which I think we have seen in the past already.
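Looking up the IP address starts from the VM's MAC address, which comes from the libvirt domain definition (52:54:00 is the prefix libvirt uses for generated QEMU/KVM MAC addresses). A minimal standard-library Python sketch that reads all interface MAC addresses from a domain XML such as the adjusted domain.xml. The XML excerpt is hypothetical (the bridge name "br0" is made up), and mapping the MAC to an IP address still has to happen elsewhere, e.g. from walter1 as described above.

```python
import xml.etree.ElementTree as ET


def domain_macs(domain_xml: str) -> list[str]:
    """Return the MAC addresses of all <interface> devices in a libvirt domain XML."""
    root = ET.fromstring(domain_xml)
    macs = []
    # libvirt nests NICs as <domain><devices><interface><mac address="..."/></interface>
    for mac in root.findall("./devices/interface/mac"):
        address = mac.get("address")
        if address:
            macs.append(address.lower())
    return macs


if __name__ == "__main__":
    # Hypothetical minimal excerpt; the real domain.xml from the HMC tarball has many more elements.
    example = """
    <domain type="kvm">
      <name>ibm-hmc-power-10</name>
      <devices>
        <interface type="bridge">
          <mac address="52:54:00:49:88:12"/>
          <source bridge="br0"/>
        </interface>
      </devices>
    </domain>
    """
    print(domain_macs(example))
```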

I tried to access the HMC web interface from outside, but it seems a firewall blocks me. The wizard offered to configure the firewall and I declined, assuming there would then be no firewall, but likely there is one. I wonder if I can restart the wizard somehow.

Updated by okurz about 1 year ago

Played a bit with the HMC together with nicksinger and mkittler. In the local HMC web interface, in "Console Management>Console Settings>Change Network Settings>LAN Adapters>Details>Firewall Settings", we clicked "Allow Incoming" for both "Secure Shell" and "Secure Remote Web Access", which allowed remote access. But then we found that the machine was no longer reachable because somehow no gateway address was set. For now, in "Console Management>Console Settings>Change Network Settings>Routing", we set a manual gateway entry with gateway address 10.168.195.254. Now we can log in over https://10.168.193.43 remotely. We can't use an FQDN as there are some holes in the DNS config for qe.nue2.suse.org; fixing that with https://gitlab.suse.de/OPS-Service/salt/-/merge_requests/3741
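Whether such a firewall or routing change took effect can be checked from outside the VM by attempting plain TCP connections to the allowed services. A minimal Python sketch, assuming "Secure Shell" and "Secure Remote Web Access" correspond to the default ports 22 and 443:

```python
import socket


def tcp_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts (socket.timeout is an OSError subclass)
        # and unreachable networks, e.g. when no gateway is configured.
        return False


# Assumption: default ports for the two services allowed in the HMC firewall settings.
HMC_SERVICES = {22: "Secure Shell", 443: "Secure Remote Web Access"}
```

For example, tcp_reachable("10.168.193.43", 443) should return True once the web interface is reachable. A False result does not distinguish a firewall drop from a missing route, and a missing gateway, as seen here, fails in exactly the same way.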

Updated by okurz 11 months ago

I wrote in https://suse.slack.com/archives/C04MDKHQE20/p1693215411497969

@Héctor Orón @Moroni Flores @John Ford @Matthias Griessmeier because there were questions: We clarified that the machine "macbeth" is what we call "grenache", which is not the machine racktables.nue.suse.com/ knows as "macbeth"; that is a different machine. grenache is planned to be moved this week, so Matthias, with help from others, will prepare it for the physical move from NUE1-SRV2. As we still do not have any HMC available in PRG2 for the machines from the 1st wave, I see a significant impact on PowerPC availability for openQA related testing. Any HMC related work should be expedited accordingly.

EDIT: According to a DM from mgriessmeier, the HMC should be ready by tomorrow(?).

Updated by okurz 11 months ago

- Related to action #134132: Bare-metal control openQA worker in NUE2 size:M added

Updated by mgriessmeier 11 months ago

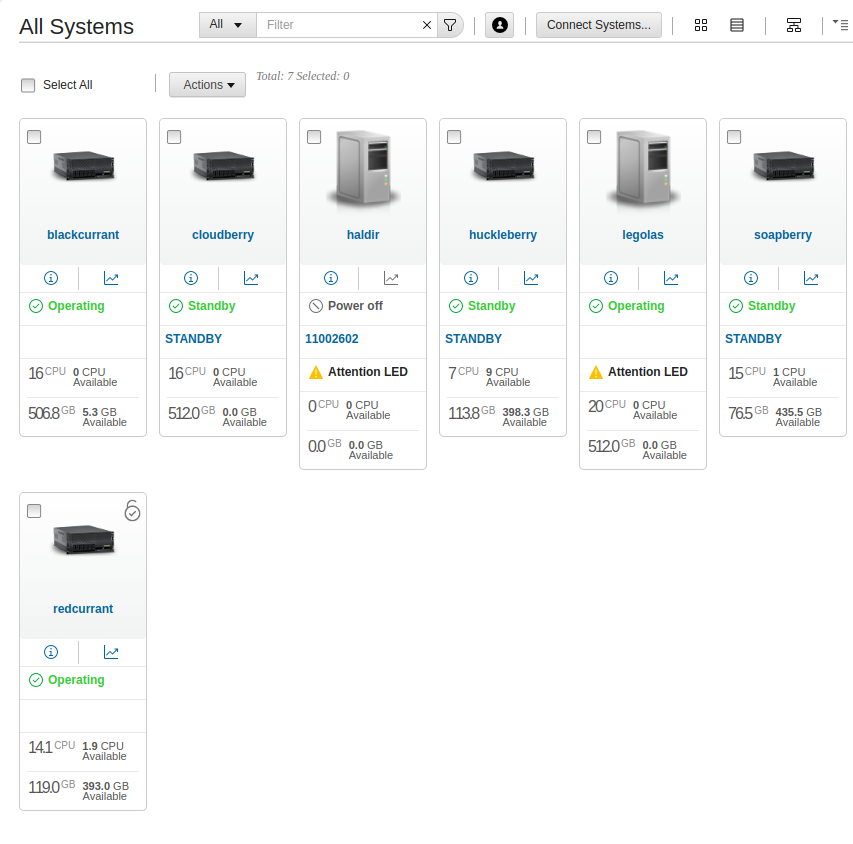

The following QE machines have been packed into boxes which will be picked up by Proact (moving company) tomorrow (2023-08-31) and transferred to Prague:

- whale

- QA-power8-3

- grenache

- cloudberry

- soapberry

- huckleberry

- redcurrant

- blackcurrant

All machines have been moved in racktables into a "transit-rack": https://racktables.suse.de/index.php?page=rack&rack_id=24497

Updated by okurz 11 months ago

gschlotter provided me credentials for a new HMC. I logged in as "hscroot" and set up accounts for everybody who already had one on powerhmc3.arch.suse.de, including "okurz" and "openqa", and sent every human operator a private message with:

The new LSG-QE PRG2 PowerVM HMC is ready. Please login once to https://10.145.14.33 and change your password. username: $username . password: $password

After that, though, I changed the accounts to use LDAP authentication, so the specified password is not necessary. I also needed to enable the "remote web access" checkbox in the user properties.

gschlotter will provide an FQDN. Two machines, haldir and legolas, are included, but authentication is not accepted so far.

I asked in https://suse.slack.com/archives/C02CANHLANP/p1693985622275479

(Oliver Kurz) who knows HMC credentials for haldir or legolas and can try to establish the HMC connection details in the new LSG-QE PRG2 HMC https://10.145.14.33 ?

Updated by okurz 10 months ago

This topic was brought up again in the weekly QE sync due to the long job schedule, with many jobs scheduled for PowerVM which could not be executed for multiple weeks now.

I wrote in https://suse.slack.com/archives/C04MDKHQE20/p1694589332264709 #dct-migration

@here second wave PowerPC machines moved to PRG were shut down on 2023-08-23 and have not been usable since then. My expectation for a migration period was days; it's becoming weeks if not months now. Can we clarify the expectations and prioritization here?

and referenced that in https://suse.slack.com/archives/C02CANHLANP/p1694589512949609 #eng-testing

@here As mentioned in the weekly QE sync call: The openQA job schedule for PowerVM tests is still long and cannot be worked on because all related resources are being migrated to the PRG2 datacenter. I would have expected related resources to be provided sooner, which did not happen, so I brought up the topic for clarification in #dct-migration https://suse.slack.com/archives/C04MDKHQE20/p1694589332264709

Updated by okurz 10 months ago

- Related to action #130585: Upgrade o3 workers to openSUSE Leap 15.5 added

Updated by okurz 10 months ago

- Due date set to 2023-10-03

- Status changed from Workable to Feedback

- Assignee set to okurz

I configured LDAP over "Users and Security" -> "Systems and Console Security" -> "Manage LDAP", taking over settings from powerhmc1 as provided by msuchanek in https://suse.slack.com/archives/C04MDKHQE20/p1695108121977439?thread_ts=1694589332.264709&cid=C04MDKHQE20

- primary URI: ldaps://ldap.suse.de

- backup URI: ldaps://ldap1.suse.de

- attribute for user login: uid

- name tree: ou=accounts,dc=suse,dc=de

Then I tried to log in using a private browser window and asked:

@Lazaros Haleplidis On login attempt it seems like there is no response from the system. Could it be that the connection from 10.145.14.33 to the LDAP server ldaps://ldap.suse.de and/or ldaps://ldap1.suse.de is blocked by firewall?
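To make the firewall question concrete, it helps to spell out which endpoints the HMC has to reach and what it presumably searches for. A Python sketch under two assumptions: the HMC uses the well-known ports (636 for ldaps://, 389 for ldap://) when a URI carries no port, and it looks users up by searching the name tree ou=accounts,dc=suse,dc=de with an equality filter on the login attribute uid. The filter escaping follows RFC 4515; how the HMC actually builds its query is not documented in this ticket.

```python
from urllib.parse import urlsplit

# Well-known ports used when an LDAP URI does not specify one.
DEFAULT_PORTS = {"ldap": 389, "ldaps": 636}


def ldap_endpoint(uri: str) -> tuple[str, int]:
    """Split an LDAP URI such as ldaps://ldap.suse.de into (host, port)."""
    parts = urlsplit(uri)
    if parts.scheme not in DEFAULT_PORTS or not parts.hostname:
        raise ValueError(f"not an LDAP URI: {uri!r}")
    return parts.hostname, parts.port or DEFAULT_PORTS[parts.scheme]


def escape_filter_value(value: str) -> str:
    """Escape the characters RFC 4515 reserves in filter assertion values."""
    reserved = {"\\", "*", "(", ")", "\x00"}
    return "".join(f"\\{ord(ch):02x}" if ch in reserved else ch for ch in value)


def user_filter(attribute: str, login: str) -> str:
    """Build an equality filter such as (uid=okurz).

    Assumption: the HMC searches with an equality filter on the login attribute.
    """
    return f"({attribute}={escape_filter_value(login)})"


if __name__ == "__main__":
    # Values taken from the HMC LDAP settings listed above.
    for uri in ("ldaps://ldap.suse.de", "ldaps://ldap1.suse.de"):
        print(uri, "->", ldap_endpoint(uri))
    print("base ou=accounts,dc=suse,dc=de, filter", user_filter("uid", "okurz"))
```

Under these assumptions, a TCP connection test from the HMC's network to ldap.suse.de:636 and ldap1.suse.de:636 should succeed; if it times out, a firewall is a likely culprit.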

Updated by okurz 10 months ago

Apparently the expectation is that we fully administrate the HMC, including the network, which will prove tricky as we don't have access to some components of the network.

Regardless, in the HMC under Console Management -> Console Settings -> Change Network Settings -> LAN Adapters I found that the HMC is running a DHCP server on eth1 with the range 10.255.255.2 - 10.255.255.254. I ran a discovery to look up machines, but it finished after 1 minute without finding any: "No systems have been found in the range of IP addresses specified."

Michal Suchanek noted:

Does not sound like a great choice given our internal network also uses these addresses. It can pose some routing challenges when trying to reach the managed machines
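Michal's concern can be made concrete with Python's ipaddress module: the DHCP range offered on eth1 is summarized into CIDR blocks and tested for overlap with another network. The 10.0.0.0/8 used below is only a hypothetical stand-in for "our internal network"; the actual internal prefixes are not listed in this ticket.

```python
import ipaddress


def dhcp_range_networks(first: str, last: str) -> list[ipaddress.IPv4Network]:
    """Return the CIDR blocks that exactly cover the inclusive range first..last."""
    return list(ipaddress.summarize_address_range(
        ipaddress.IPv4Address(first), ipaddress.IPv4Address(last)))


def overlaps(networks: list[ipaddress.IPv4Network], other: str) -> bool:
    """True if any of the given networks shares at least one address with `other`."""
    other_net = ipaddress.IPv4Network(other)
    return any(net.overlaps(other_net) for net in networks)


if __name__ == "__main__":
    hmc_dhcp = dhcp_range_networks("10.255.255.2", "10.255.255.254")
    print([str(net) for net in hmc_dhcp])
    # Hypothetical stand-in for the internal network, not taken from the ticket.
    print("overlaps 10.0.0.0/8:", overlaps(hmc_dhcp, "10.0.0.0/8"))
```

With the hypothetical 10.0.0.0/8 the overlap check returns True, which illustrates the routing ambiguity: a route to an internal 10.x network and the HMC's private management segment can claim the same addresses.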

Regardless of the HMC I wrote in https://suse.slack.com/archives/C04MDKHQE20/p1695112259111409?thread_ts=1694589332.264709&cid=C04MDKHQE20

(Oliver Kurz) @John Ford @Moroni Flores ok, regardless of the HMC setup, what are the plans regarding DHCP/DNS for the PowerPC systems, if any? As racktables is mostly missing MAC addresses and has no updated IP address entries, and as I don't even have access to the switches, I don't think it is feasible to expect us to set this up as well.

Updated by okurz 10 months ago

Worked on this with nicksinger. Through the HMC one can connect to the ASM, with the HMC acting as a kind of jump host. To do that we clicked on the system in the HMC web interface, then "System Actions -> Operations -> Launch Advanced System Management", and used our awesome social engineering skills to authenticate in the ASM. In the ASM, under "Login Profile -> Change Password", we changed the password for user "HMC", e.g. to "admin". Then in the HMC we used "System Actions -> Operations -> Update System Password" to enter the same password there.

And I wrote in https://suse.slack.com/archives/C04MDKHQE20/p1695125093587689?thread_ts=1694589332.264709&cid=C04MDKHQE20

(Oliver Kurz) Can somebody please power on huckleberry, soapberry, blackcurrant, redcurrant, cloudberry so that we can try to connect them in the HMC?

Updated by dawei_pang 10 months ago

I am able to access the HMC web UI at https://10.145.14.33/ and SSH using my LDAP account, and I successfully powered on legolas and its partition PARID=2.

Previously, the first port 98:be:94:07:3f:c4 could get an IP address from DHCP and be used for PXE boot.

Now I set all ports to DHCP; eth2 and eth3 are up but do not get an IP address automatically:

```
localhost:~ # yast lan list
id  name  bootproto
0   eth0  dhcp
1   eth3  dhcp
2   eth1  dhcp
3   eth2  dhcp
localhost:~ # ip addr show
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: eth0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc mq state DOWN group default qlen 1000
    link/ether 98:be:94:07:3f:c4 brd ff:ff:ff:ff:ff:ff
    altname enP19p96s0f0
3: eth1: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc mq state DOWN group default qlen 1000
    link/ether 98:be:94:07:3f:c5 brd ff:ff:ff:ff:ff:ff
    altname enP19p96s0f1
4: eth2: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
    link/ether 98:be:94:07:3f:c6 brd ff:ff:ff:ff:ff:ff
    altname enP19p96s0f2
    inet6 fe80::9abe:94ff:fe07:3fc6/64 scope link
       valid_lft forever preferred_lft forever
5: eth3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
    link/ether 98:be:94:07:3f:c7 brd ff:ff:ff:ff:ff:ff
    altname enP19p96s0f3
    inet6 fe80::9abe:94ff:fe07:3fc7/64 scope link
       valid_lft forever preferred_lft forever
```
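In the output above, eth2 and eth3 have carrier (LOWER_UP) but only link-local IPv6 addresses and no IPv4 address. That pattern suggests the link itself is fine but no DHCP server answers on that segment. A small Python sketch that flags such interfaces, assuming the JSON printed by "ip -j addr show" from iproute2 uses the fields ifname, flags, addr_info and family (as I recall them; verify against the installed version):

```python
import json


def links_without_ipv4(ip_json: str) -> list[str]:
    """Return non-loopback interfaces that have carrier (LOWER_UP) but no IPv4 address.

    Assumes the iproute2 JSON layout: [{"ifname", "flags": [...], "addr_info": [{"family"}]}].
    """
    result = []
    for link in json.loads(ip_json):
        flags = link.get("flags", [])
        if "LOOPBACK" in flags or "LOWER_UP" not in flags:
            continue  # skip loopback and ports without carrier, e.g. NO-CARRIER
        families = {addr.get("family") for addr in link.get("addr_info", [])}
        if "inet" not in families:
            result.append(link["ifname"])
    return result
```

On the affected machine the input would be the output of "ip -j addr show"; for the state shown above the expected result is eth2 and eth3.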

Updated by okurz 10 months ago

- Status changed from Feedback to Blocked

@dawei_pang please create separate tickets for all issues concerning machines after you can already reach them like the above issue.

Other topics:

hrommel and mpluskal asked about whale.qam.suse.de. According to https://racktables.nue.suse.com/index.php?page=object&object_id=9594 that machine is in transition and is blocked by https://jira.suse.com/browse/ENGINFRA-2501 about missing rails to mount the server.

We can track the package shipping to PRG2 DC in

https://www.tnt.com/express/en_rs/site/shipping-tools/tracking.html?cons=239240550&searchType=CON&source=share&utm_source=email&utm_campaign=shipment&utm_medium=email&utm_content=en_rs&tnt_urv=&tnt_urt=sfmc_id

Updated by okurz 10 months ago

- Related to action #136121: Repurpose PowerPC hardware in FC Basement - deagol added

Updated by dawei_pang 10 months ago

okurz wrote in #note-24:

@dawei_pang please create separate tickets for all issues concerning machines after you can already reach them like the above issue.

Thanks Oliver, I created a new ticket for the issue: https://progress.opensuse.org/issues/136124

Updated by livdywan 10 months ago

We can track the package shipping to PRG2 DC in

https://www.tnt.com/express/en_rs/site/shipping-tools/tracking.html?cons=239240550&searchType=CON&source=share&utm_source=email&utm_campaign=shipment&utm_medium=email&utm_content=en_rs&tnt_urv=&tnt_urt=sfmc_id

The state says "Collecting", so maybe it should arrive this week.

Updated by okurz 10 months ago

- Due date deleted (2023-10-03)

Still blocked on https://jira.suse.com/browse/ENGINFRA-2501

Updated by livdywan 9 months ago

okurz wrote in #note-28:

Still blocked on https://jira.suse.com/browse/ENGINFRA-2501

Said ticket is (still) blocked on https://jira.suse.com/browse/ENGINFRA-2009, which was updated on 2023-10-16.

Updated by okurz 9 months ago

Created https://jira.suse.com/browse/ENGINFRA-3206 "Provide PDU administrative access to LSG QE Tools for PDU-A-J11 in rack J11", as discussed in the weekly DCT migration call, so that Eng-Infra can help us help ourselves.

Updated by mgriessmeier 9 months ago

- File clipboard-202310271737-fezwr.png added

So I got the following private Slack message from mflores:

machines are powered up, hmc connected, vlan deployed

```
xe-0/0/41 up up huckleberry.arch.suse.de - mgmt2
xe-0/0/42 up up redcurrent.arch.suse.de - mgmt2
xe-0/0/43 up up blackcurrent.arch.suse.de - mgmt1
xe-0/0/44 up up redcurrent.arch.suse.de - mgmt2
xe-0/0/45 up up cloudberry.arch.suse.de - mgmt2
```

I have asked mflores to communicate this in #dct-migration

Whatever that means: if someone wants to try to discover something or follow up on this, feel free :)

(I quickly tried to discover some machines within the hmc in the known range 10.145.14.x without luck)
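Reading the forwarded port list closely, redcurrent.arch.suse.de appears on two ports (xe-0/0/42 and xe-0/0/44), while soapberry, one of the five machines requested to be powered on, does not appear at all. In addition, "redcurrent" and "blackcurrent" differ from the machine names redcurrant and blackcurrant used elsewhere in this ticket. A Python sketch to check such lists mechanically, assuming the whitespace-separated layout shown in the message (port, admin state, link state, host, "-", management label):

```python
import re
from collections import defaultdict

# Assumes lines like "xe-0/0/41 up up huckleberry.arch.suse.de - mgmt2" as in the message.
PORT_LINE = re.compile(
    r"^(?P<port>\S+)\s+(?P<admin>\S+)\s+(?P<link>\S+)\s+(?P<host>\S+)\s+-\s+(?P<mgmt>\S+)$"
)


def ports_by_host(lines):
    """Group switch ports by the host name found in their description."""
    hosts = defaultdict(list)
    for line in lines:
        match = PORT_LINE.match(line.strip())
        if match:
            hosts[match["host"]].append(match["port"])
    return dict(hosts)


def duplicated_hosts(hosts):
    """Return only the hosts that appear on more than one port."""
    return {host: ports for host, ports in hosts.items() if len(ports) > 1}


def missing_hosts(hosts, expected):
    """Return expected short host names that appear in no port description."""
    seen = {host.split(".")[0] for host in hosts}
    return sorted(set(expected) - seen)
```

Comparing against the five machines requested earlier, missing_hosts(hosts, ["huckleberry", "soapberry", "blackcurrant", "redcurrant", "cloudberry"]) returns ['blackcurrant', 'redcurrant', 'soapberry']: two of these are spelling differences and one is genuinely absent.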

Updated by mgriessmeier 9 months ago

- File deleted (clipboard-202310271737-fezwr.png)

Updated by mgriessmeier 9 months ago

With huge help from Conrad Bachmann, who checked the switch configurations and brought the machines into the correct VLANs, @nicksinger and I were able to discover 5 machines in the PowerHMC and connect their management ports.

Updated by okurz 9 months ago

- Copied to action #139109: Support move of non-openQA PowerPC machines to PRG2, i.e. haldir, legolas, whale, blackcurrant, cloudberry, huckleberry, soapberry, nessberry added

Updated by okurz 9 months ago

- Copied to action #139112: Ensure OSD openQA PowerPC machine grenache is operational from PRG2 added

Updated by okurz 9 months ago

- Description updated (diff)

- Due date set to 2023-11-25

- Status changed from Blocked to Feedback

https://jira.suse.com/browse/ENGINFRA-2009 resolved.

I split out some tasks (#139109, #139112) to make further steps easier to execute and to allow independent execution.

I marked all completed tasks in the ticket description with "DONE". Now waiting for results from pcervinka and acarvajal, who are looking into PowerPC machines as part of https://suse.slack.com/archives/C02CANHLANP/p1699017470325589

Updated by okurz 8 months ago

Meeting with jford, mflores, cbachman, gsouza. Meeting minutes to be provided by jford (TBC). Follow-up in https://suse.slack.com/archives/C04MDKHQE20/p1699451342346359:

(Oliver Kurz) @John Ford @Moroni Flores @Conrad Bachman based on the meeting we just had about pending tasks for PowerPC setup (CC @Matthias Griessmeier @Dawei Pang @Alvaro Carvajal): we talked about freeing rackspace in J11, related to https://jira.suse.com/browse/ENGINFRA-2908. Given that the hardware "H03-ch1" in https://racktables.nue.suse.com/index.php?page=object&object_id=23373 is still there and currently unused, I don't expect new hardware in this rack in the next months. Maybe the easiest would be to leave all machines where they are currently mounted, but only if you are able to provide a "QE (not-openQA)" network in PRG2-J11. Otherwise I would suggest moving haldir and legolas. Can you state whether the QE network can be provided to selected machines in J11, plus a connection to a separate QE PowerVM HMC?

(Moroni Flores) we can provide the QE network to those machines, and a separate HMC - but let me reiterate here that the arch network will not be available in this rack

(Oliver Kurz) Understood. So far I know of no requirement for arch network. Given that I suggest we keep all already racked machines in place.

Updated by okurz 8 months ago

- Related to action #139199: Ensure OSD openQA PowerPC machine redcurrant is operational from PRG2 size:M added

Updated by okurz 8 months ago

I reviewed all entries in https://racktables.nue.suse.com/index.php?page=rack&rack_id=21278 and have put a proper "common name" and FQDN to separate openQA and QE (non-openQA) machines.

Updated by okurz 8 months ago

https://suse.slack.com/archives/C02CANHLANP/p1700039047989109

(Oliver Kurz) @Petr Cervinka any news regarding https://suse.slack.com/archives/C02CANHLANP/p1699017470325589 ?

(Petr Cervinka) We had a call with people this week and found out that it is more broken than it looks, and we need to find time for more troubleshooting later this week.

Besides that there is no further work planned by me right now.

Updated by okurz 6 months ago

Minor change to use the new FQDN for the HMC:

https://gitlab.suse.de/openqa/salt-pillars-openqa/-/merge_requests/708

But this is still mostly blocked by the other referenced cards.

Updated by okurz 6 months ago

- Status changed from Blocked to In Progress

- Target version changed from future to Ready

https://gitlab.suse.de/openqa/salt-pillars-openqa/-/merge_requests/708 merged. Additional meeting with multiple people. Now providing more details to various Jira cards as requested.

While thinking about checking more PowerVM instances which are currently connected to the HMC, I looked for more documentation and found a concise overview:

http://www.unixwerk.eu/aix/hmc-howto.html

With that I learned, for example, how to add SSH public keys for authentication, so I ran the following, which now allows me to log in with my SSH key:

```
mkauthkeys -u okurz -a "ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAILAtWUGdPW5LO1rMqVULy0VWKJ4ba+y2uglpi3gaZvuB okurz@linux-28d6.suse"
```
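Before pasting a key into mkauthkeys on the HMC, it can be worth checking that the key line survived copy and paste intact, since a truncated key may still be stored as text but never match. A Python sketch that parses an OpenSSH public key line (type, base64 blob, optional comment), checks that the type embedded in the blob matches, and for ssh-ed25519 checks that exactly 32 key bytes follow. The argument list reuses only the -u and -a options shown above; how it is then executed on the HMC (e.g. over ssh as hscroot) is left open.

```python
import base64
import struct


def parse_openssh_pubkey(line: str) -> tuple[str, bytes, str]:
    """Parse "type base64-blob [comment]" and sanity-check the blob.

    Raises ValueError (binascii.Error is a subclass) if the line is malformed.
    """
    fields = line.split()
    if len(fields) < 2:
        raise ValueError("expected at least key type and base64 blob")
    key_type, blob_b64, comment = fields[0], fields[1], " ".join(fields[2:])
    blob = base64.b64decode(blob_b64, validate=True)
    if len(blob) < 4:
        raise ValueError("blob too short")
    # The blob starts with a length-prefixed (big-endian uint32) copy of the key type.
    (type_len,) = struct.unpack(">I", blob[:4])
    embedded_type = blob[4:4 + type_len].decode("ascii", errors="replace")
    if embedded_type != key_type:
        raise ValueError(f"type {key_type!r} does not match blob type {embedded_type!r}")
    rest = blob[4 + type_len:]
    # For ed25519 the remainder is a uint32 length of 32 followed by the 32-byte key.
    if key_type == "ssh-ed25519" and (len(rest) != 36 or struct.unpack(">I", rest[:4])[0] != 32):
        raise ValueError("ssh-ed25519 key must carry exactly 32 key bytes")
    return key_type, blob, comment


def mkauthkeys_argv(user: str, key_line: str) -> list[str]:
    """Build the mkauthkeys call used above (-u user -a key) after validating the key.

    Executing it on the HMC is intentionally not covered here.
    """
    parse_openssh_pubkey(key_line)
    return ["mkauthkeys", "-u", user, "-a", key_line.strip()]
```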

Updated by okurz 6 months ago

As not much happened in the past days regarding Eng-Infra working on our requests, I asked again today in https://suse.slack.com/archives/C04MDKHQE20/p1706086589323479 about progress on another ticket, which is still related in terms of managing expectations:

Question about https://jira.suse.com/browse/ENGINFRA-3685 . It has priority "urgent" and it says "Active Sprint: EngInfra Sprint 37 ends 2024-01-30". So how likely is it that this card will be picked up, not even saying completed, only picked up until 2024-01-30?

Updated by okurz 6 months ago

I was addressed by Jacek Rybicki in Slack DM. I put my notes from that discussion into https://jira.suse.com/browse/ENGINFRA-3551#comment-1321979

Updated by okurz 6 months ago

- Status changed from In Progress to Blocked

Some minor progress by Eng-Infra but not much, so switching to "Blocked", currently primarily blocked on:

- https://jira.suse.com/browse/ENGINFRA-3764 "Ensure a PRG2 based QE PowerPC HMC is reachable over proper FQDN and reverse PTR"

- DONE https://jira.suse.com/browse/ENGINFRA-3751 "Connect and setup redcurrant.oqa.prg2.suse.org"

and secondarily on other cards from https://jira.suse.com/issues/?jql=project%20%3D%20%22Engineering%20Infra%20%22%20AND%20labels%20%3D%20QE-LSG%20ORDER%20BY%20priority%20DESC

Updated by okurz 3 months ago

- Related to action #159048: Setup new Power10 machine for QE LSG in PRG2 (S/N 7882391) added

Updated by okurz about 1 month ago

https://jira.suse.com/browse/ENGINFRA-3206 progressed and we have remote control access for PDUs in PRG2-J11 which I now included in https://wiki.suse.net/index.php/SUSE-Quality_Assurance/QE_infrastructure#PRG2 . https://jira.suse.com/browse/ENGINFRA-3764 still open.

Updated by okurz about 1 month ago

- Status changed from Blocked to Resolved

- Target version changed from Tools - Next to Ready

AC1 is fulfilled: all production machines are now usable, including blackcurrant, which isn't even used for openQA itself, see #153724. There are additional PowerPC resources which are currently not in use as their former users freed the machines. Those tasks will be followed up in #160520.