action #137804

closed coordination #137714: [qe-core] proposal for new tests that covers btrfsmaintenance

[qe-core] btrfsmaintenance - test btrfs balance on large disks

10%

Description

Balance

This test needs at least 2 extra block devices, which can be separate (virtual) disks or partitions of the same disk.
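A pre-flight check for this requirement could look like the sketch below. It assumes `lsblk` is available and only counts whole disks, so a setup that uses partitions of one disk would need a different check. The helper name `count_disks` and the threshold of 3 (system disk plus two extra) are illustrative assumptions, not part of the ticket.

```shell
#!/bin/sh
# Hypothetical helper: count whole disks in `lsblk -dn -o NAME,TYPE` output
# read from stdin (one "NAME TYPE" pair per line).
count_disks() {
    awk '$2 == "disk" { n++ } END { print n + 0 }'
}

# Usage on a live system (assumes the system disk is one of the disks):
#   [ "$(lsblk -dn -o NAME,TYPE | count_disks)" -ge 3 ] || echo "need 2 extra disks"
```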

Create a raid0 filesystem on a single 5 GB disk:

# mkfs.btrfs -d raid0 -m raid0 /dev/vdb

# mount /dev/vdb /mnt/raid

# df -h | grep raid

/dev/vdb 5.0G 3.4M 4.8G 1% /mnt/raid

check statistics:

# btrfs filesystem df /mnt/raid

Data, RAID0: total=512.00MiB, used=64.00KiB

Data, single: total=512.00MiB, used=256.00KiB

System, RAID0: total=8.00MiB, used=0.00B

System, single: total=32.00MiB, used=16.00KiB

Metadata, RAID0: total=256.00MiB, used=112.00KiB

Metadata, single: total=256.00MiB, used=0.00B

GlobalReserve, single: total=3.25MiB, used=0.00B

WARNING: Multiple block group profiles detected, see 'man btrfs(5)'.

WARNING: Data: single, raid0

WARNING: Metadata: single, raid0

WARNING: System: single, raid0
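The warnings above could be checked mechanically in a test. The following is a minimal sketch that reads `btrfs filesystem df` output and lists every block group type that appears with more than one profile. The helper name `mixed_profiles` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `btrfs filesystem df` output on stdin and print
# each block group type (Data, Metadata, System) that has more than one profile.
mixed_profiles() {
    awk -F'[,:]' '
        /^(Data|Metadata|System), / {
            type = $1
            profile = $2
            sub(/^ +/, "", profile)
            # Count each (type, profile) pair only once.
            if (!((type, profile) in seen)) {
                seen[type, profile] = 1
                count[type]++
            }
        }
        END {
            for (t in count)
                if (count[t] > 1) print t
        }
    ' | sort
}

# Usage on a live system:
#   btrfs filesystem df /mnt/raid | mixed_profiles
```

For the output shown above this would list Data, Metadata and System; an empty result would mean every type uses a single profile.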

create a big binary file

# time dd if=/dev/random of=/mnt/raid/bigfile.bin bs=4M count=1024

1024+0 records in

1024+0 records out

4294967296 bytes (4.3 GB, 4.0 GiB) copied, 46.4441 s, 92.5 MB/s

real 0m46.445s

user 0m0.000s

sys 0m45.580s

check statistics to see disk usage

# btrfs device usage /mnt/raid/

/dev/vdb, ID: 1

Device size: 5.00GiB

Device slack: 0.00B

Data,single: 3.94GiB

Data,RAID0/1: 512.00MiB

Metadata,single: 256.00MiB

Metadata,RAID0/1: 256.00MiB

System,single: 32.00MiB

System,RAID0/1: 8.00MiB

Unallocated: 21.00MiB

notice the difference between free space reported by 'df' and unallocated space reported by btrfs:

# df -h | grep -E '(raid|Filesystem)'

Filesystem Size Used Avail Use% Mounted on

/dev/vdb 5.0G 4.1G 470M 90% /mnt/raid

# btrfs filesystem df /mnt/raid/

Data, RAID0: total=512.00MiB, used=448.50MiB

Data, single: total=3.94GiB, used=3.56GiB

System, RAID0: total=8.00MiB, used=0.00B

System, single: total=32.00MiB, used=16.00KiB

Metadata, RAID0: total=256.00MiB, used=4.33MiB

Metadata, single: total=256.00MiB, used=0.00B

GlobalReserve, single: total=3.80MiB, used=0.00B

WARNING: Multiple block group profiles detected, see 'man btrfs(5)'.

WARNING: Data: single, raid0

WARNING: Metadata: single, raid0

WARNING: System: single, raid0
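One plausible reading of the difference: `df` counts the free space inside already allocated data chunks in addition to the unallocated space, while `btrfs device usage` reports only the latter. The sketch below redoes that arithmetic with the figures from the outputs above. The helper names `to_mib` and `chunk_free_mib` are hypothetical, and the result is only approximate because the inputs are rounded.

```shell
#!/bin/sh
# Hypothetical helper: convert a btrfs size such as 3.94GiB or 448.50MiB to MiB.
to_mib() {
    echo "$1" | awk '{
        v = $0 + 0                    # leading numeric part of the string
        if ($0 ~ /GiB$/)
            v *= 1024
        else if ($0 ~ /KiB$/)
            v /= 1024
        else if ($0 !~ /MiB$/)
            v /= 1048576              # plain bytes, e.g. "0.00B"
        printf "%.2f\n", v
    }'
}

# Hypothetical helper: free space in a chunk type, total minus used, in MiB.
chunk_free_mib() {
    awk -v t="$(to_mib "$1")" -v u="$(to_mib "$2")" \
        'BEGIN { printf "%.2f\n", t - u }'
}

single=$(chunk_free_mib 3.94GiB 3.56GiB)       # Data, single
raid0=$(chunk_free_mib 512.00MiB 448.50MiB)    # Data, RAID0
unalloc=$(to_mib 21.00MiB)                     # Unallocated on /dev/vdb

awk -v a="$single" -v b="$raid0" -v c="$unalloc" \
    'BEGIN { printf "%.0f MiB\n", a + b + c }'  # prints: 474 MiB
```

The estimate of about 474 MiB is close to the 470M that `df` reports as available, whereas only 21 MiB is unallocated.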

now add a new disk device to the raid0:

# btrfs device add -f /dev/vdc /mnt/raid/

# btrfs device usage /mnt/raid/

/dev/vdb, ID: 1

Device size: 5.00GiB

Device slack: 0.00B

Data,single: 3.94GiB

Data,RAID0/1: 512.00MiB

Metadata,single: 256.00MiB

Metadata,RAID0/1: 256.00MiB

System,single: 32.00MiB

System,RAID0/1: 8.00MiB

Unallocated: 21.00MiB

/dev/vdc, ID: 2

Device size: 5.00GiB

Device slack: 0.00B

Unallocated: 5.00GiB
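The per-device `Unallocated` values make the imbalance visible: all chunks sit on /dev/vdb while /dev/vdc is entirely unallocated. A test could extract these values with something like the sketch below; the helper name `unallocated_per_device` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `btrfs device usage` output on stdin and print
# "<device> <unallocated>" for each device.
unallocated_per_device() {
    awk '
        /^\/dev\// { dev = $1; sub(/,$/, "", dev) }   # "/dev/vdb, ID: 1"
        $1 == "Unallocated:" { print dev, $2 }
    '
}

# Usage on a live system:
#   btrfs device usage /mnt/raid/ | unallocated_per_device
```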

the aggregate filesystem is now larger, but the available space does not reflect the new size because the filesystem is *unbalanced*; we need to trigger a balance of the filesystem. The next operation is I/O intensive:

# df -h | grep -E '(raid|Filesystem)'

Filesystem Size Used Avail Use% Mounted on

/dev/vdb 10G 4.1G 490M 90% /mnt/raid

# btrfs balance start --full-balance -v /mnt/raid

now free space is available:

# df -h | grep -E '(raid|Filesystem)'

Filesystem Size Used Avail Use% Mounted on

/dev/vdb 10G 3.6G 6.3G 37% /mnt/raid
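An automated test could assert on the `Use%` column before and after the balance instead of reading the output by eye. The following is a minimal sketch; the helper name `use_percent` is hypothetical, and the exact percentages will differ between runs.

```shell
#!/bin/sh
# Hypothetical helper: print the Use% value (without the % sign) for a
# mount point, given `df -h` style output on stdin.
use_percent() {
    awk -v mp="$1" '$NF == mp { sub(/%$/, "", $(NF-1)); print $(NF-1) }'
}

# Usage on a live system:
#   before=$(df -h | use_percent /mnt/raid)
#   btrfs balance start --full-balance -v /mnt/raid
#   after=$(df -h | use_percent /mnt/raid)
#   [ "$after" -lt "$before" ] || echo "balance did not free space"
```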

Here we can either force the balance, or just "start" it and let it run in the background, using the script from the maintenance package: /usr/share/btrfsmaintenance/btrfs-balance.sh

Acceptance Criteria

- AC1: BTRFS balance test is scheduled in Staging and product in development for SLES and ALP

- AC2: A good performance baseline is established, so we know when a bug or other defect hinders the performance.

Notes

- Contact the Kernel team to ask for an iSCSI disk or similar with 100GB, or better 1TB, for these tests to be run

- See documentation for backends at: https://github.com/os-autoinst/os-autoinst/blob/master/doc/backend_vars.asciidoc

- The objective is to test the functionality of the balance tools provided by the package rather than testing the tool from a performance perspective; we're looking to avoid scenarios where tests are failing due to

- Stability of the test is proven by scheduling multiple test runs with different disk types and configurations, preferably using disks attached over the network via NetApp or something similar.


Updated by amanzini over 1 year ago

My 2c:

if the objective of the test is to test the pure btrfs-balance functionality, e.g. start with an "unbalanced" setup and then rebalance it, the test might as well be done with "small" (20GB) disks and be scheduled without dedicated hardware, similar to what we already do for the RAID1 setup. Bigger disks may be required to better reflect the customer experience and to reason about performance / system load during the balance operation.

Updated by amanzini about 1 year ago

- Assignee set to amanzini

need to clarify scheduling on bare metal or iSCSI-attached storage for bigger disk sizes; in the meantime, development will go forward with virtual disks

Updated by szarate about 1 year ago

- Sprint changed from QE-Core: October Sprint 23 (Oct 11 - Nov 08) to QE-Core: December Sprint 23 (Dec 13 - Jan 10)

- Tags changed from qe-core-october-sprint to qe-core-october-sprint, qe-core-december-sprint

Updated by amanzini about 1 year ago

- Status changed from Workable to In Progress

Updated by amanzini about 1 year ago

some early notes:

by default /etc/sysconfig/btrfsmaintenance only balances "/"; need to change it to "auto" or point it to our raid0 volume
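For illustration, the changed setting might look like the fragment below. `BTRFS_BALANCE_MOUNTPOINTS` is the variable name used in the upstream btrfsmaintenance sysconfig template; it should be verified against the file installed on the system under test. `/mnt/raid` is the mount point from the description.

```shell
# /etc/sysconfig/btrfsmaintenance (fragment)
# The default value is "/"; "auto" would select all mounted btrfs filesystems.
BTRFS_BALANCE_MOUNTPOINTS="/mnt/raid"
```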

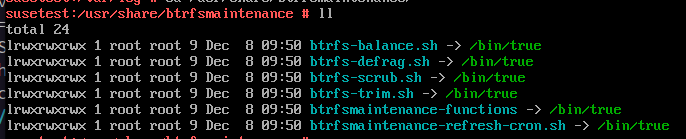

on 15SP6 it seems the maintenance scripts are just symlinks to /bin/true?

need to check on older versions
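A quick way to check this on any version is to compare where a script resolves to. The following is a minimal sketch; the helper name `is_noop_script` is hypothetical, and the path in the usage comment is the one named in the description, which may not be the file that is actually symlinked.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the given path resolves to the same file
# as /bin/true, i.e. running it would do nothing.
is_noop_script() {
    [ "$(readlink -f "$1")" = "$(readlink -f /bin/true)" ]
}

# Usage on a live system:
#   if is_noop_script /usr/share/btrfsmaintenance/btrfs-balance.sh; then
#       echo "btrfs-balance.sh is a no-op on this system"
#   fi
```

Resolving both sides with `readlink -f` keeps the comparison valid on systems where /bin is itself a symlink to /usr/bin.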

Updated by amanzini about 1 year ago

- Status changed from In Progress to Feedback

- % Done changed from 0 to 10

Updated by amanzini about 1 year ago

as the test requires NUMDISKS=3, need some clarification on where and how to schedule it

Updated by amanzini about 1 year ago

comment about scheduling on functional ? https://github.com/os-autoinst/os-autoinst-distri-opensuse/pull/18348#issuecomment-1867334240

criteria says

AC1: BTRFS balance test is scheduled in Staging and product in development for SLES and ALP

Updated by szarate about 1 year ago · Edited

amanzini wrote in #note-10:

comment about scheduling on functional ? https://github.com/os-autoinst/os-autoinst-distri-opensuse/pull/18348#issuecomment-1867334240

criteria says

AC1: BTRFS balance test is scheduled in Staging and product in development for SLES and ALP

Functional is Product in development (there's one for each codestream of ALP, SLES), in case staging hasn't been enabled yet... let's leave it out for now, but do create a ticket about it for the future

Updated by amanzini about 1 year ago

Updated by szarate about 1 year ago

- Related to action #40163: [core][aarch64][s390x] test fails in btrfs_qgroups - needs to be scheduled on two-disk-machine. added